Big O notation tells you how an algorithm scales. It doesn’t tell you why your application is still slow, expensive to run, or crashing under real-world load. In today’s landscape of massive datasets and performance-hungry users, memory—not CPU—is often the true bottleneck. This guide moves beyond theory to deliver practical, hardware-aware memory optimization strategies you can apply immediately. You’ll see how data structure choices, allocation patterns, and cache-friendly design directly impact latency, scalability, and cloud costs. By connecting code decisions to how memory actually behaves on modern systems, this article equips you to build faster, leaner, and more resilient software.

The Hardware Contract: Why Cache Locality is King

To understand performance, you first need to understand the memory pyramid. At the top sit CPU registers (tiny, lightning-fast storage inside the processor). Next come L1, L2, and L3 caches—small but extremely fast memory layers. Finally, at the base, there’s main memory (RAM), which is far larger but dramatically slower. Each step down the pyramid increases capacity and latency.

Here’s the hard truth: a cache miss, when the CPU can’t find data in cache and must fetch it from RAM, can be a hundred times slower than an L1 hit, or worse. According to the latency figures popularized by Jeff Dean (Google), an L1 access takes roughly 1 nanosecond, while a trip to RAM takes around 100 nanoseconds or more. That gap is the silent performance killer.

Some argue modern CPUs are smart enough to hide this latency automatically. To a degree, yes. However, hardware can’t fully compensate for poor data layout.

This is where spatial locality helps. When related data is stored contiguously, the CPU prefetcher pulls in nearby bytes proactively. Think arrays instead of scattered pointers.

Likewise, temporal locality means reusing recently accessed data so it stays “hot” in cache.


In practice, tight loops and compact structures often outperform clever abstractions (even if they look less glamorous).

Strategy 1: Data Structures for Mechanical Sympathy

Mechanical sympathy—designing software that aligns with how hardware actually works—starts with data structures. The difference between fast and sluggish systems often comes down to memory layout, not algorithms.


Arrays vs. Linked Lists

Iterating over contiguous array elements is dramatically faster than traversing linked lists because of spatial locality: nearby memory locations are loaded together into CPU cache lines (typically 64 bytes). When elements sit side by side, the CPU’s prefetcher accurately predicts the next access. Linked lists, by contrast, scatter nodes across memory, causing frequent cache misses. Studies in systems research consistently show array iteration outperforming pointer-chasing patterns due to predictable access (e.g., Drepper, “What Every Programmer Should Know About Memory”).
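As a rough illustration of the two traversal shapes, here is a minimal C++ sketch. The function names are hypothetical and it makes no timing claims; the point is only that the same logical loop touches adjacent addresses in one case and follows pointers to separately allocated nodes in the other.

```cpp
#include <cassert>
#include <cstddef>
#include <list>
#include <numeric>
#include <vector>

// Contiguous storage: consecutive elements share cache lines.
long long sum_vector(const std::vector<int>& values) {
    return std::accumulate(values.begin(), values.end(), 0LL);
}

// Node-based storage: each step follows a pointer to another heap node.
long long sum_list(const std::list<int>& values) {
    return std::accumulate(values.begin(), values.end(), 0LL);
}

// Fills both containers with 1..n and checks that the sums agree.
long long checked_sum(std::size_t n) {
    std::vector<int> as_vector(n);
    std::iota(as_vector.begin(), as_vector.end(), 1);
    std::list<int> as_list(as_vector.begin(), as_vector.end());
    const long long total = sum_vector(as_vector);
    assert(total == sum_list(as_list));
    return total;
}
```

Timing the two functions on a large input with your own compiler and hardware is the only reliable way to see how big the gap is for your workload.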

Array of Structs (AoS) vs. Struct of Arrays (SoA)

With AoS, each object stores all its fields together. That’s intuitive, but inefficient if you only process one field at scale. SoA groups similar fields into separate arrays, allowing tighter packing and fewer wasted cache loads. Game engines and high-frequency trading systems report measurable gains when switching to SoA for vectorized workloads (think SIMD instructions crunching aligned floats like a well-rehearsed orchestra).
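The two layouts might look like this in C++. This is a sketch with made-up particle types, not code from any particular engine; it assumes a workload that only needs the mass field.

```cpp
#include <cassert>
#include <vector>

// Array of Structs: all fields of one particle sit together (16 bytes).
struct ParticleAoS {
    float x, y, z;
    float mass;
};

// Struct of Arrays: each field lives in its own contiguous array.
struct ParticlesSoA {
    std::vector<float> x, y, z;
    std::vector<float> mass;
};

// Uses 4 of every 16 bytes it pulls through the cache.
float total_mass_aos(const std::vector<ParticleAoS>& particles) {
    float total = 0.0f;
    for (const ParticleAoS& p : particles) total += p.mass;
    return total;
}

// Walks one densely packed array, so every loaded byte is used.
float total_mass_soa(const ParticlesSoA& particles) {
    assert(particles.mass.size() == particles.x.size());
    float total = 0.0f;
    for (float m : particles.mass) total += m;
    return total;
}
```

The trade-off runs the other way when most code reads every field of one object at a time; in that case AoS keeps those fields on the same cache line.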

Hash Table Considerations

Hash performance hinges on hashing quality and load factor (the ratio of stored elements to buckets). High load factors lengthen collision chains or probe sequences, and every extra hop is a potential cache miss. Benchmarks commonly show lookup latency rising sharply once load factors exceed roughly 0.75, though the exact threshold depends on the collision strategy.
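With the C++ standard library, one way to keep the load factor in check is to cap it and size the table up front. The sketch below uses std::unordered_map, whose default maximum load factor is 1.0; the helper name make_index and the "item-" values are invented for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <unordered_map>

// Builds a map whose bucket array is sized before any insert happens,
// so filling it never triggers a rehash or exceeds the load-factor cap.
std::unordered_map<int, std::string> make_index(std::size_t expected_items) {
    std::unordered_map<int, std::string> index;
    index.max_load_factor(0.75f);   // cap on average elements per bucket
    index.reserve(expected_items);  // enough buckets for expected_items under the cap
    for (std::size_t i = 0; i < expected_items; ++i) {
        index.emplace(static_cast<int>(i), "item-" + std::to_string(i));
    }
    assert(index.load_factor() <= index.max_load_factor());
    return index;
}
```

Note that std::unordered_map is node-based, so each lookup still follows at least one pointer; open-addressing tables from third-party libraries trade differently and are worth benchmarking separately.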

Choosing Compact Data Types

Using int16_t instead of int64_t can quadruple how many elements fit in one cache line. Smaller types reduce bandwidth pressure and improve throughput.
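The arithmetic behind that claim can be checked at compile time. The sketch below assumes a 64-byte cache line (typical, but not universal); kCacheLineBytes, elements_per_line, and to_int16 are illustrative names.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed cache line size in bytes.
constexpr std::size_t kCacheLineBytes = 64;

// How many values of type T fit in one cache line.
template <typename T>
constexpr std::size_t elements_per_line() {
    return kCacheLineBytes / sizeof(T);
}

static_assert(elements_per_line<std::int64_t>() == 8, "8 x 8-byte values per line");
static_assert(elements_per_line<std::int16_t>() == 32, "32 x 2-byte values per line");

// Narrows only when the value fits, so shrinking a type never silently truncates.
inline std::int16_t to_int16(std::int64_t value) {
    assert(value >= INT16_MIN && value <= INT16_MAX);
    return static_cast<std::int16_t>(value);
}
```

The range check matters: a smaller type is only a win when the data genuinely fits in it.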

For performance-sensitive systems, like those discussed in Optimizing Wireless Connectivity for IoT Devices, these structural decisions often outweigh micro-optimizations.

Strategy 2: Optimizing Data Access Patterns

Row-Major vs. Column-Major Traversal

In C and C++, 2D arrays are stored in row-major order, meaning each row sits contiguously in memory and the rows follow one another. Java’s 2D arrays are arrays of separately allocated row arrays, so each row is contiguous even though the rows themselves may be scattered. Either way, when you iterate row by row, the CPU pulls in a full cache line (typically 64 bytes) and uses most of it immediately. Iterate column by column, however, and you jump across memory, triggering frequent cache misses. Research from Ulrich Drepper’s What Every Programmer Should Know About Memory shows cache misses can cost 100+ CPU cycles, versus 1–4 cycles for L1 hits. That’s not a rounding error; that’s a performance cliff.
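Here is a small C++ sketch of the two traversal orders over a matrix kept in a single row-major buffer. The Matrix type is a hypothetical helper written for this example, not a library class.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// A rows x cols matrix stored in one contiguous, row-major buffer.
struct Matrix {
    std::size_t rows;
    std::size_t cols;
    std::vector<double> data;

    Matrix(std::size_t r, std::size_t c) : rows(r), cols(c), data(r * c, 0.0) {}

    double& at(std::size_t r, std::size_t c) {
        assert(r < rows && c < cols);
        return data[r * cols + c];
    }
    double at(std::size_t r, std::size_t c) const {
        assert(r < rows && c < cols);
        return data[r * cols + c];
    }
};

// Inner loop walks adjacent elements (stride of one element).
double sum_row_major(const Matrix& m) {
    double total = 0.0;
    for (std::size_t r = 0; r < m.rows; ++r)
        for (std::size_t c = 0; c < m.cols; ++c)
            total += m.at(r, c);
    return total;
}

// Inner loop jumps m.cols elements per step, touching a new line each time
// once a row is wider than a cache line.
double sum_column_major(const Matrix& m) {
    double total = 0.0;
    for (std::size_t c = 0; c < m.cols; ++c)
        for (std::size_t r = 0; r < m.rows; ++r)
            total += m.at(r, c);
    return total;
}
```

Both functions return the same sum; only the order of memory accesses differs, which is what a benchmark on a large matrix would expose.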

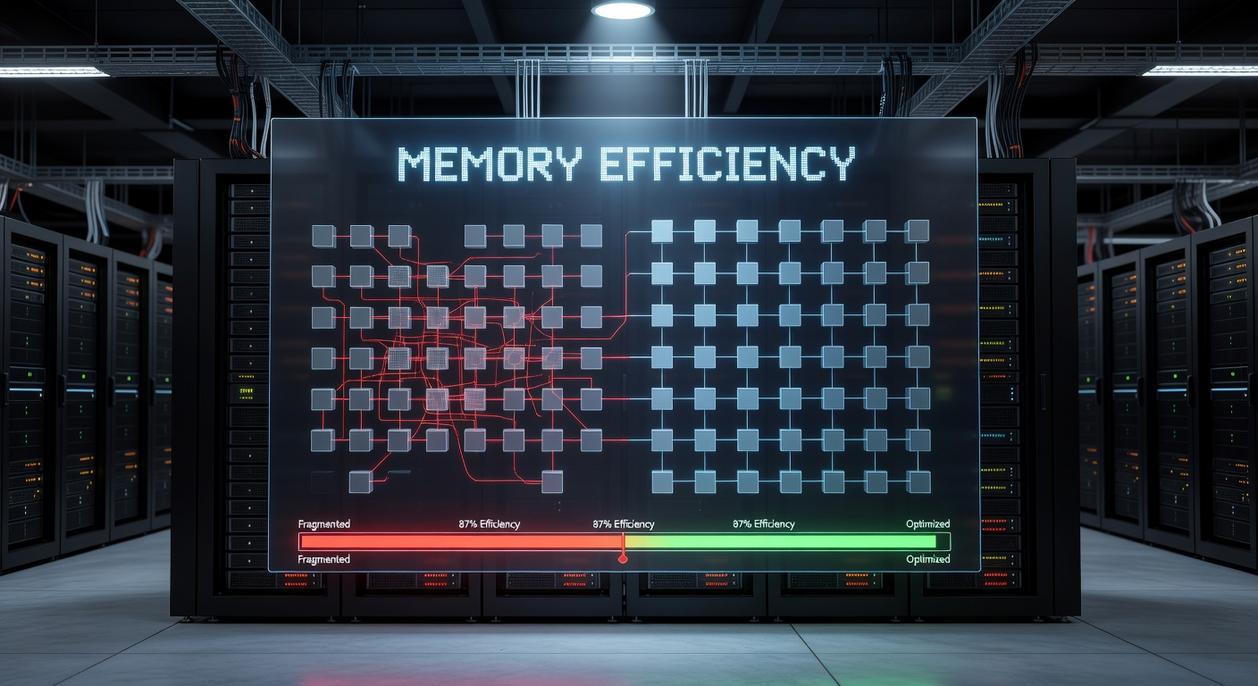

Memory Pooling

Instead of repeatedly calling malloc or new, memory pooling pre-allocates a large contiguous block and manages it manually. This reduces fragmentation and per-call allocator overhead, including the occasional system call when the heap has to grow. Studies of high-frequency trading systems show pooled allocators can cut allocation time by over 50% in allocation-heavy workloads. (Pro tip: use pools for objects with predictable lifetimes.)
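A pool can be as simple as one up-front allocation plus a stack of free slots. The FixedPool class below is a hypothetical, single-threaded sketch that assumes T is default-constructible; production allocators also deal with construction, destruction, growth, and thread safety.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Fixed-capacity pool: all slots are allocated once, then recycled.
template <typename T>
class FixedPool {
public:
    explicit FixedPool(std::size_t capacity) : slots_(capacity) {
        free_.reserve(capacity);
        // Push in reverse so the first acquire() returns the first slot.
        for (std::size_t i = capacity; i > 0; --i) free_.push_back(&slots_[i - 1]);
    }

    // Copying would leave free_ pointing into another pool's storage.
    FixedPool(const FixedPool&) = delete;
    FixedPool& operator=(const FixedPool&) = delete;

    // Returns an unused slot, or nullptr when the pool is exhausted.
    T* acquire() {
        if (free_.empty()) return nullptr;
        T* slot = free_.back();
        free_.pop_back();
        return slot;
    }

    // Hands a slot back; it must have come from this pool.
    void release(T* slot) {
        assert(slot >= slots_.data() && slot < slots_.data() + slots_.size());
        free_.push_back(slot);
    }

    std::size_t available() const { return free_.size(); }

private:
    std::vector<T> slots_;  // one contiguous allocation made up front
    std::vector<T*> free_;  // stack of currently unused slots
};
```

Because acquire and release are a pop and a push on a vector that never reallocates, neither call reaches the general-purpose allocator after construction.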

Prefetching

Modern CPUs use hardware prefetching to anticipate linear access patterns. Sequential reads enable the processor to fetch upcoming data before it’s needed, effectively hiding memory latency.

Data Alignment

Aligning structures to 64-byte cache lines prevents an object that fits within one line from straddling two, avoiding double fetches. These memory optimization strategies collectively reduce latency and improve throughput measurably.
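In C++, alignas expresses this directly. The sketch below assumes a 64-byte line; Counter and starts_on_line_boundary are illustrative names.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed cache line size in bytes.
constexpr std::size_t kCacheLineBytes = 64;

// alignas forces every Counter to begin on a cache line boundary.
// sizeof is rounded up to the alignment, so each Counter fills one line.
struct alignas(kCacheLineBytes) Counter {
    std::uint64_t hits;
    std::uint64_t misses;
};

static_assert(alignof(Counter) == kCacheLineBytes, "starts on a line boundary");
static_assert(sizeof(Counter) == kCacheLineBytes, "padded to a full line");

// True when the address is a multiple of the assumed line size.
inline bool starts_on_line_boundary(const void* p) {
    assert(p != nullptr);
    return reinterpret_cast<std::uintptr_t>(p) % kCacheLineBytes == 0;
}
```

The padding is the cost: 16 bytes of payload now occupy 64, so this suits a handful of hot objects better than large arrays of small ones.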

Strategy 3: Reducing Allocation Overhead

If you care about performance, allocation overhead (the time and CPU cost of reserving memory) should bother you. It bothers me. Too many systems lean on the heap like it’s limitless—then act surprised when latency spikes.

The Power of the Stack

Stack allocation stores short-lived data in a last-in, first-out memory region that’s extremely fast. Small structs, temporary buffers, loop counters: keep them there. Heap allocation, by contrast, costs an allocator call in C and C++ and invites garbage collection pauses in managed runtimes (and yes, users notice).
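The contrast can be sketched in C++ with a fixed-size std::array, which lives in the function's stack frame, against a std::vector, which allocates from the heap. The bound kMaxCount and the function names are assumptions made for this example.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <numeric>
#include <vector>

// Hypothetical upper bound that lets the scratch buffer live on the stack.
constexpr std::size_t kMaxCount = 8;

// Scratch buffer is part of the stack frame: no allocator call.
long sum_squares_stack(std::size_t n) {
    assert(n <= kMaxCount);
    std::array<long, kMaxCount> scratch{};
    for (std::size_t i = 0; i < n; ++i) scratch[i] = static_cast<long>(i * i);
    return std::accumulate(scratch.begin(),
                           scratch.begin() + static_cast<std::ptrdiff_t>(n), 0L);
}

// Same work, but every call allocates and frees a heap buffer.
long sum_squares_heap(std::size_t n) {
    std::vector<long> scratch(n);
    for (std::size_t i = 0; i < n; ++i) scratch[i] = static_cast<long>(i * i);
    return std::accumulate(scratch.begin(), scratch.end(), 0L);
}
```

The stack version only works because the size has a small, known bound; large or unbounded buffers still belong on the heap.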

Object Reuse

Creating objects inside tight loops is like buying a new coffee mug every morning instead of washing one. Reuse a single instance, reset its fields, move on. Less churn. Fewer collections.

- Allocate once, reuse often

- Reset state instead of reallocating
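A minimal C++ sketch of the same idea: one string buffer is created outside the loop and cleared on each iteration instead of being constructed anew. The function line_lengths is a made-up example.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Joins each row's fields with commas and records the resulting length,
// reusing a single string buffer across all rows.
std::vector<std::size_t> line_lengths(
        const std::vector<std::vector<std::string>>& rows) {
    std::vector<std::size_t> lengths;
    lengths.reserve(rows.size());
    std::string line;  // allocated once, outside the loop
    for (const auto& row : rows) {
        line.clear();  // resets the length; in practice the capacity is kept
        for (std::size_t i = 0; i < row.size(); ++i) {
            if (i > 0) line += ',';
            line += row[i];
        }
        lengths.push_back(line.size());
    }
    assert(lengths.size() == rows.size());
    return lengths;
}
```

Once the buffer has grown to fit the longest row, later iterations typically append without touching the allocator at all.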

Value Types vs. Reference Types

Value types are stored inline; reference types require indirection and heap tracking. That extra pointer hop adds up.
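C++ has no built-in split between value and reference types, but the same distinction shows up as storing objects directly versus storing pointers to them. The sketch below uses an invented Point type to show both layouts.

```cpp
#include <cassert>
#include <memory>
#include <vector>

struct Point {
    double x;
    double y;
};

// Values stored inline: the vector owns one contiguous block of Points.
double sum_x_inline(const std::vector<Point>& points) {
    double total = 0.0;
    for (const Point& p : points) total += p.x;
    return total;
}

// Each element is a pointer to a separately heap-allocated Point,
// so every access adds one indirection.
double sum_x_boxed(const std::vector<std::unique_ptr<Point>>& points) {
    double total = 0.0;
    for (const auto& p : points) {
        assert(p != nullptr);
        total += p->x;
    }
    return total;
}
```

The boxed layout mirrors how reference types behave in managed runtimes: the container holds pointers, and the objects themselves may land anywhere on the heap.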

Some argue modern runtimes handle this fine. I disagree—smart memory optimization strategies still win in real workloads.

From Theory to Practice: A Memory-First Mindset

You set out to understand how real performance gains happen—and now you’ve seen that true optimization starts with respecting the hardware. When software ignores memory layout and access patterns, even the most elegant algorithms become slow and unpredictable. That pain compounds as systems scale.

The shift is practical: prioritize data structures and access patterns that enhance cache locality, and validate decisions with a memory profiler. Start small. Identify one hot loop in your current project, apply a targeted change, and measure the impact.

Performance bottlenecks won’t fix themselves. Take action today—profile, optimize, and build software that’s measurably faster and built to scale.